
Lori Grunin/CNET

Are you baffled by the multitude of laptop, desktop and tablet options being hurled at you as generic “creative” or “creator” machines? Marketing materials rarely distinguish among the widely varying needs of different pursuits; marketers basically consider anything with a discrete GPU (a graphics processor that’s not integrated into the CPU), no matter how low-power, suitable for all sorts of creative endeavors. That can get really frustrating when you’re trying to wade through a mountain of choices.

On one hand, the wealth of options means there’s something for every type of work, suitable for any creative tool and at a multitude of prices. On the other, it means you run the risk of overspending on a model you don’t really need. Or, more likely, underspending and ending up with a system that just can’t keep up because you haven’t properly judged the trade-offs among components.

One thing hasn’t changed over time: The most important components to worry about are the CPU, which generally handles most final-quality rendering and AI acceleration for a growing number of smart features; the GPU, which determines how fluid your screen interactions are and handles some AI acceleration as well; the screen; and the amount of memory. Other considerations can be your network speed and stability, since so much is moving to and from the cloud, and storage speed and capacity if you’re dealing with large video or render files.

You still won’t find anything particularly budget-worthy for a decent experience. Even a basic model worth buying will cost at least $1,000. As with a gaming laptop, the extras that make it worthy of the name are what differentiate it from a general-purpose competitor, and those always cost at least a bit extra.

How do I figure out what I need?

Check your software requirements

In order to use some advanced features, accelerate some operations or adhere to certain security constraints, some professional applications require workstation-class components: Nvidia A- or T-series or AMD W-series GPUs rather than their GeForce or Radeon equivalents, Intel Xeon or AMD Threadripper CPUs and ECC (error correction code) memory.

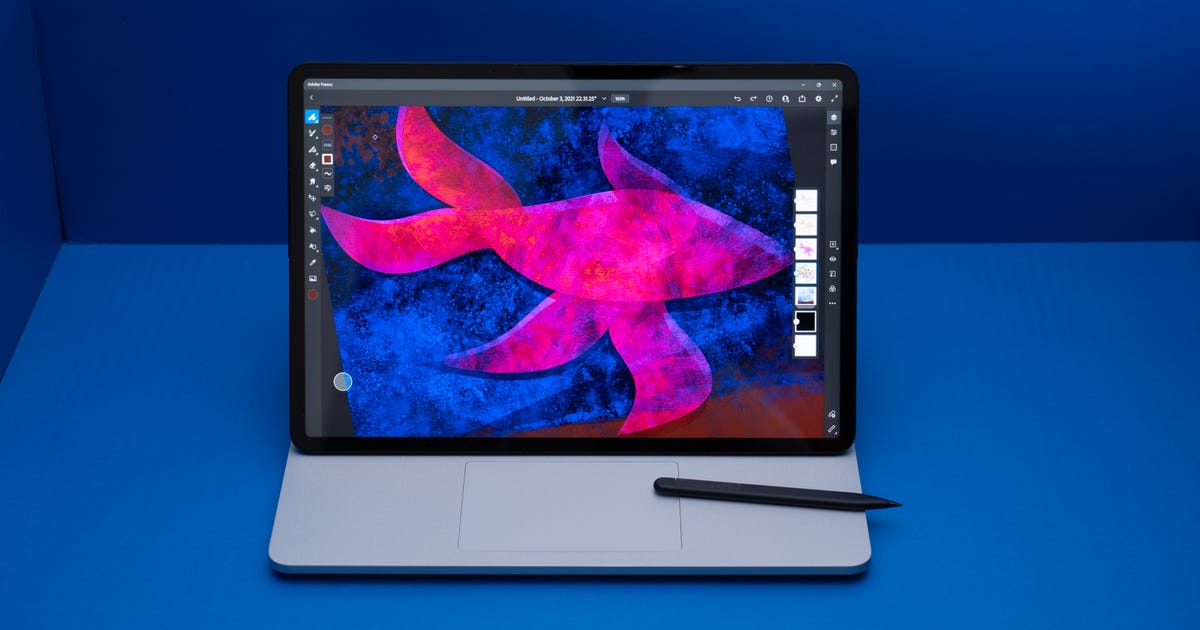

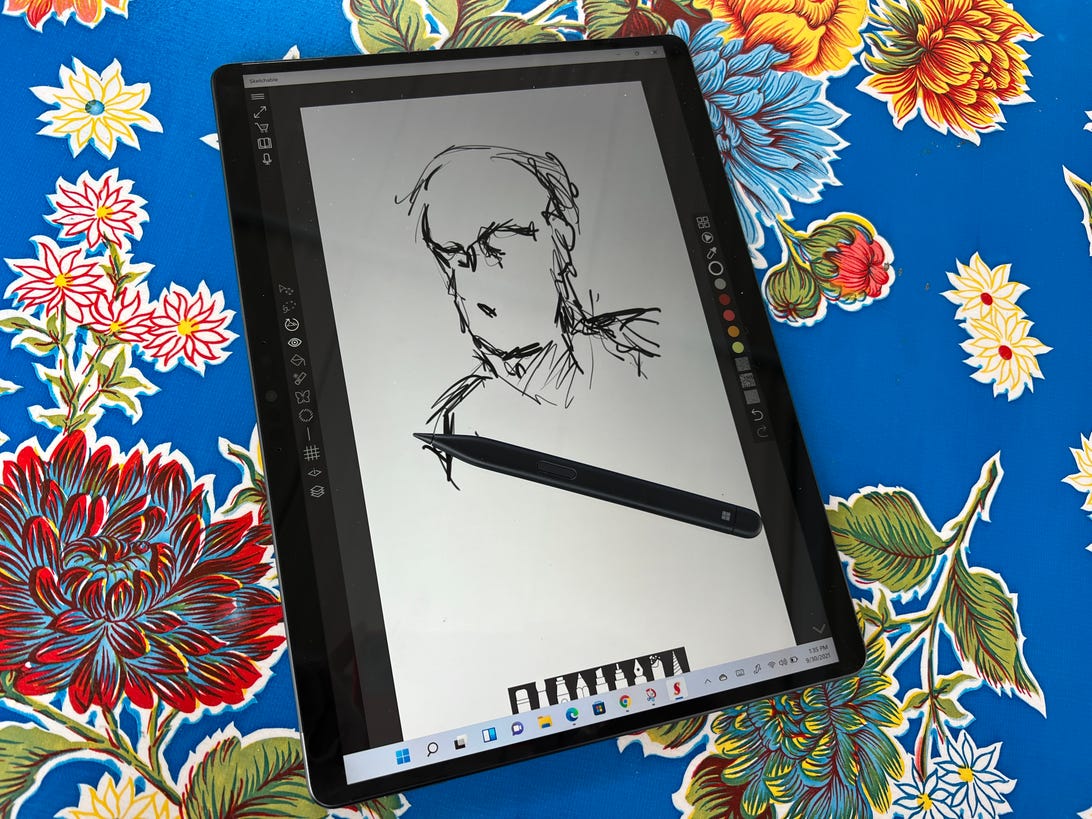

Andrew Hoyle/CNET

Nvidia loosened the reins on the division between its consumer GPUs and its workstation GPUs with a middle ground, Nvidia Studio. The Studio drivers, as opposed to GeForce’s Game Ready ones, add optimizations for creation-focused applications rather than games, which means you don’t necessarily have to fork over as much cash.

Most of the important applications now run natively on the MacBook Pro’s M1 processors, including software written to use Metal (Apple’s graphics application programming interface). But a lot of software still doesn’t have both Windows and MacOS versions, which means you have to pick the platform that supports any critical utilities or specific software packages. If you need both and aren’t seriously budget-constrained, consider buying a fully kitted-out MacBook Pro and running a Windows virtual machine on it. That’s an imperfect solution, though.

Base the specs on the application you spend the most time in

Companies that make professional applications usually provide recommended specs for running their software. If your budget demands that you make performance trade-offs, you need to know what to throw more money at. Since every application is different, you can’t generalize to the level of “video editing uses CPU cores more than GPU acceleration” (though a big, fast SSD is almost always a good idea). The requirements for photo editing are generally lower than those for video, so those systems will probably be cheaper and more tempting. But if you spend 90% of your time editing video, the savings might not be worth it.

Dan Ackerman/CNET

There are a few generalizations I can make to help narrow down your options:

- More and faster CPU cores — more P-Cores if we’re talking about Intel’s new 12th-gen processors — directly translate into shorter final-quality rendering times for both video and 3D and faster ingestion and thumbnail generation of high-resolution photos and video. Intel’s new P-series processors are specifically biased for creative (and other CPU-intensive) work.

- More and faster GPU cores plus more graphics memory (VRAM) improve the fluidity of much real-time work, such as using the secondary display option in Lightroom, scrubbing through complex timelines for video editing, working on complex 3D models and so on.

- Always get 16GB or more memory. Frankly, that’s my general recommendation for Windows systems (MacOS runs better on less memory than Windows). But a lot of graphics applications will use as much memory as they can get their grubby little bits on; for instance, I’ve never seen Lightroom use less than all the available memory in my system (or CPU cores) when importing photos.

- Stick with SSD storage and at least 1TB of it. Budget laptops may have a slow, secondary spinning disk drive to cheaply pad out the amount of storage. And while you could get away with 512GB, you’ll probably find yourself having to clear files off onto external storage a little too frequently.

- Get the fastest Wi-Fi possible, which at the moment is Wi-Fi 6E. A lot of work is now split between the cloud and local storage, and even if you don’t intend to use the cloud much, your software may force it on you. For instance, Adobe really, really wants you to use its cloud and is moving an increasing number of your files to cloud-only. And if you accidentally save that 256MB Photoshop file in the ether, you’re in for a rude awakening when you try to open it next.
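To put that rude awakening in rough numbers, here is a minimal Python sketch that estimates how long a 256MB file might take to come back down from the cloud. The function name `transfer_seconds` and the connection speeds are illustrative assumptions, not measurements, and real-world throughput is usually well below a connection's advertised rate.

```python
def transfer_seconds(size_megabytes: float, link_megabits_per_second: float) -> float:
    """Estimate a best-case transfer time, ignoring latency and protocol overhead."""
    if link_megabits_per_second <= 0:
        raise ValueError("link speed must be positive")
    size_megabits = size_megabytes * 8  # 1 byte = 8 bits
    return size_megabits / link_megabits_per_second


# Hypothetical sustained throughputs in megabits per second, for illustration only.
ASSUMED_SPEEDS_MBPS = {
    "slow connection": 25,
    "moderate connection": 100,
    "fast connection": 500,
}

for label, mbps in ASSUMED_SPEEDS_MBPS.items():
    print(f"{label}: {transfer_seconds(256, mbps):.1f} seconds")
```

Even under these best-case assumptions, the wait scales directly with file size, so multi-gigabyte video or render files would take proportionally longer.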

Do I need a 4K or 100% Adobe RGB screen?

Not necessarily. For highly detailed work — think a CAD wireframe or illustration — you might benefit from the higher pixel density of a 4K display, but for the most part, you can get away with something lower (and you’ll be rewarded with slightly better battery life, too).
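If you want to compare pixel density yourself, it can be worked out from a screen's resolution and diagonal size. The following is a minimal Python sketch; the function name `pixels_per_inch` and the 15.6-inch screen size are assumptions chosen for illustration, not figures from any particular laptop.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_inches: float) -> float:
    """Pixel density: the diagonal length in pixels divided by the diagonal in inches."""
    if diagonal_inches <= 0:
        raise ValueError("diagonal must be positive")
    return math.hypot(width_px, height_px) / diagonal_inches


DIAGONAL_INCHES = 15.6  # assumed laptop screen size, for illustration only

RESOLUTIONS = {
    "Full HD (1,920x1,080)": (1920, 1080),
    "4K UHD (3,840x2,160)": (3840, 2160),
}

for name, (width, height) in RESOLUTIONS.items():
    print(f"{name}: {pixels_per_inch(width, height, DIAGONAL_INCHES):.0f} ppi")
```

On the same size panel, 4K UHD has exactly twice the linear pixel density of full HD, which is why fine lines in wireframes and illustrations look crisper.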

Color is more important, but your needs depend on what you’re doing and at what level. A lot of manufacturers will cut corners with a 100% sRGB display, but it won’t be able to reproduce a lot of saturated colors; it really is a least-common-denominator space, and you can always buy a cheap external monitor to preview or proof images the way they’ll appear on cheaper displays.

For graphics that will only be appearing online, a screen with at least 95% P3 (aka DCI-P3) coverage is my general choice, and they’re becoming quite common and less expensive than they used to be. If you’re trying to match colors between print and screen, then 99% Adobe RGB makes more sense. Either one will display lovely saturated colors and the broad tonal range you might need for photo editing, but Adobe RGB skews more toward reproducing cyan and magenta, which are important for printing.

A display that supports color profiles stored in hardware, like HP’s Dreamcolor, Calman Ready, Dell PremierColor and so on, will allow for more consistent color when you use multiple calibrated monitors. These displays also tend to be better, as calibration requires a tighter color error tolerance than typical screens offer. Of course, they also tend to be more expensive, and you frequently need to step up to a mobile workstation for this type of capability. You can use hardware calibrators such as the Calibrite ColorChecker Display (formerly the X-Rite i1Display Pro) to generate software profiles, but those are more difficult to work with when matching colors across multiple connected monitors.

https://www.cnet.com/tech/computing/how-to-buy-a-laptop-to-edit-photos-videos-or-other-creative-tasks/